Table of Contents

- Introduction to Data Loading and Cleansing

- Types of Data Loading

- Initial Loading

- Subsequent Loading

- Update Loading Strategies

- Trickle/Continuous Feed

- Incremental Load

- Full Refresh

- Choosing the Right Loading Strategy

- Understanding Data Cleansing

- Reasons for Dirty Data

- Types of Data Anomalies

- Syntactically Dirty Data

- Semantically Dirty Data

- Coverage Anomalies

- Handling Missing Records

- Key-Based Classification of Problems

- Primary Key Problems

- Non-Primary Key Problems

- Steps in Data Cleansing

- Automatic Data Cleansing Techniques

- ETL vs. ELT: Understanding the Difference

- Conclusion

Introduction to Data Loading and Cleansing

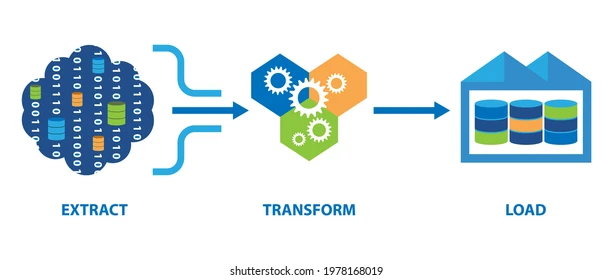

Data loading and cleansing are critical parts of the ETL (Extract, Transform, Load) workflow. Together they ensure that the information entering a data warehouse is trustworthy, consistent, and ready for analytical use. During ETL, large volumes of data flow in from operational systems, legacy databases, spreadsheets, and web-based sources, each with its own formats, structures, and quality levels. If this raw data is loaded without proper preparation, organizations may face misleading analytics, flawed dashboards, inaccurate forecasts, and reduced confidence in decisions.

A robust ETL setup carefully moves information from source systems, checks it for errors, standardizes formats, removes duplicates, handles missing fields, and harmonizes conflicting values. This preparation process prevents issues like inconsistent customer records, outdated transaction details, mismatched identifiers, and incomplete entries from reaching business intelligence tools. Clean and well-structured data supports executives, analysts, and automated models in generating insights that are reliable, actionable, and aligned with organizational goals.

A disciplined approach to preparation and quality control also improves operational efficiency. Teams spend less time fixing errors manually, reports become more accurate, and downstream pipelines need fewer reruns and repairs. Strong ETL practices therefore form the foundation for high-quality reporting, predictive analytics, machine learning, regulatory compliance, and strategic decision-making.

ETL processes involve three major stages:

- Extract – Collecting data from multiple heterogeneous sources.

- Transform – Converting extracted data into a consistent and usable format.

- Load – Writing the processed data into the target data warehouse or database.
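
The three stages can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the CSV content, the `sales` table, and the function names are assumptions made for the example, not part of any particular ETL tool.

```python
import csv
import io
import sqlite3

# Hypothetical source data; in practice this would come from a file or an API.
RAW_CSV = "id,name,amount\n1, Alice ,10.50\n2,Bob,7\n"

def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: trim whitespace and convert strings to proper types."""
    return [(int(r["id"]), r["name"].strip(), float(r["amount"])) for r in rows]

def load(conn, records):
    """Load: write the processed records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")  # in-memory database standing in for the warehouse
load(conn, transform(extract(RAW_CSV)))
loaded = conn.execute("SELECT id, name, amount FROM sales ORDER BY id").fetchall()
```

Keeping each stage in its own function makes it easier to test and replace the stages independently.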

Types of Data Loading

Initial Loading

Initial loading is the process of loading data into a data warehouse for the first time. This typically involves migrating historical data from legacy systems, flat files, or other operational systems into a staging area before transferring it to the target tables.

Subsequent Loading

Subsequent loading is performed after the initial load to update the data warehouse with new or modified records. It is often done incrementally, so that the warehouse stays current without reloading all data from scratch. The update strategies described next are the main ways of carrying it out.

Update Loading Strategies

Trickle/Continuous Feed

This strategy continuously collects data and performs row-level insert and update operations. It is suitable for environments where near real-time updates are required.
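
As a rough sketch of the row-level behaviour, the following Python applies change events one at a time, updating a row if it exists and inserting it otherwise. The `customer` table, the event dictionaries, and the `apply_event` helper are hypothetical; a real feed would typically read from a message queue or a change-data-capture stream.

```python
import sqlite3

def apply_event(conn, event):
    """Apply one change event as a row-level update, falling back to an insert."""
    cur = conn.execute(
        "UPDATE customer SET city = ? WHERE id = ?", (event["city"], event["id"])
    )
    if cur.rowcount == 0:  # no existing row was updated, so this is a new record
        conn.execute(
            "INSERT INTO customer (id, city) VALUES (?, ?)", (event["id"], event["city"])
        )
    conn.commit()  # commit per event so each change is visible right away

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, city TEXT)")

# Hypothetical stream of change events arriving one at a time.
events = [
    {"id": 1, "city": "Lahore"},
    {"id": 2, "city": "Karachi"},
    {"id": 1, "city": "Islamabad"},  # a later update to customer 1
]
for event in events:
    apply_event(conn, event)

customers = conn.execute("SELECT id, city FROM customer ORDER BY id").fetchall()
```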

Incremental Load

Incremental loading applies ongoing changes in periodic batches. This approach balances performance and data freshness. It suits medium to high update rates because batches can be scheduled at times that limit the impact on operational systems.
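
One common way to find the changes for each batch is a "high-water mark" on a last-modified timestamp. The sketch below assumes the source table has an `updated_at` column that is reliably maintained; the table layout and the `incremental_load` function are illustrative assumptions, and other change-detection methods (such as log-based capture) exist.

```python
import sqlite3

source = sqlite3.connect(":memory:")     # stands in for the operational system
warehouse = sqlite3.connect(":memory:")  # stands in for the data warehouse

ddl = "CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL, updated_at TEXT)"
source.execute(ddl)
warehouse.execute(ddl)
source.executemany("INSERT INTO orders VALUES (?, ?, ?)", [
    (1, 20.0, "2024-01-01T09:00:00"),
    (2, 35.0, "2024-01-02T09:00:00"),
])

def incremental_load(source, warehouse, last_watermark):
    """Copy rows changed since last_watermark and return the new watermark."""
    rows = source.execute(
        "SELECT id, total, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # INSERT OR REPLACE overwrites the warehouse row when the key already exists.
    warehouse.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    warehouse.commit()
    return rows[-1][2] if rows else last_watermark

# First batch: an empty watermark sorts before every timestamp, so all rows load.
watermark = incremental_load(source, warehouse, "")

# Between batches, the source changes: one update and one new order.
source.execute(
    "UPDATE orders SET total = 40.0, updated_at = '2024-01-03T09:00:00' WHERE id = 2"
)
source.execute("INSERT INTO orders VALUES (3, 15.0, '2024-01-03T10:00:00')")

# Second batch picks up only the two changed rows.
watermark = incremental_load(source, warehouse, watermark)
result = warehouse.execute("SELECT id, total FROM orders ORDER BY id").fetchall()
```

ISO 8601 timestamps stored as text compare correctly as strings, which is why a plain `>` works here.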

Full Refresh

A full refresh completely erases the existing table content and reloads it with fresh data. It is recommended for small tables, or for tables where most rows change between refreshes. Best practices include:

- Temporarily disabling referential integrity constraints during the reload, then re-enabling and validating them afterwards.

- Using shadow tables, so the new data is built separately and swapped in without impacting ongoing query workloads.
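
The shadow-table idea can be sketched as follows, using SQLite for illustration. The `product` table and the `full_refresh` function are hypothetical, and constraint handling is left out to keep the example short; how a swap is best performed depends on the database in use.

```python
import sqlite3

# isolation_level=None puts the connection in autocommit mode, so the
# transaction around the swap can be controlled explicitly with BEGIN/COMMIT.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE product (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("INSERT INTO product VALUES (1, 'Old widget')")

def full_refresh(conn, fresh_rows):
    """Reload the product table by building a shadow copy and swapping it in."""
    # Build the replacement off to the side; queries still see the old table.
    conn.execute("DROP TABLE IF EXISTS product_shadow")
    conn.execute("CREATE TABLE product_shadow (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO product_shadow VALUES (?, ?)", fresh_rows)
    # Swap the shadow table in within a single transaction.
    conn.execute("BEGIN")
    conn.execute("DROP TABLE product")
    conn.execute("ALTER TABLE product_shadow RENAME TO product")
    conn.execute("COMMIT")

full_refresh(conn, [(1, "Widget"), (2, "Gadget")])
products = conn.execute("SELECT id, name FROM product ORDER BY id").fetchall()
```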

Choosing the Right Loading Strategy

The choice depends on trade-offs among:

- Data Freshness – How up-to-date your warehouse data must be.

- Performance – Speed of loading and transformation.

- Data Volatility – Frequency of changes in source data.

For example, a source with a low update rate whose changes must be visible almost immediately may call for a trickle feed, whereas a high update rate combined with tolerance for delayed availability may favor incremental loads or a full refresh.

Understanding Data Cleansing

Data cleansing ensures that the data loaded into the warehouse is accurate, consistent, and usable. Dirty data can lead to incorrect analytics and poor business decisions.

Reasons for Dirty Data

Some common causes of dirty data include:

- Dummy or placeholder values.

- Missing or null data.

- Multipurpose fields.

- Contradicting records.

- Inappropriate use of address lines.

- Reused primary keys or non-unique identifiers.

- Data integration issues across multiple systems.

Types of Data Anomalies

Syntactically Dirty Data

- Lexical Errors – Mismatch between stored values and expected format.

- Irregularities – Non-uniform use of values or units (e.g., salaries recorded in USD in one source and in PKR in another).
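
Both kinds of syntactic problem can often be caught with simple checks. The sketch below flags dates that do not match an expected `YYYY-MM-DD` pattern and converts salaries to a single currency. The expected date format, the record layout, and especially the exchange rate are assumptions chosen for illustration, not real reference values.

```python
import re

# Assumed expected format for dates: YYYY-MM-DD.
DATE_PATTERN = re.compile(r"\d{4}-\d{2}-\d{2}")

# Hypothetical exchange rates (units of currency per 1 USD), for illustration only.
UNITS_PER_USD = {"USD": 1, "PKR": 250}

def has_lexical_error(value):
    """Return True when the stored value does not match the expected format."""
    return DATE_PATTERN.fullmatch(value) is None

def salary_in_usd(amount, currency):
    """Resolve a unit irregularity by converting every salary to USD."""
    return amount / UNITS_PER_USD[currency]

records = [
    {"hired": "2023-05-01", "salary": 50000, "currency": "USD"},
    {"hired": "01/05/2023", "salary": 250000, "currency": "PKR"},
]

lexical_errors = [r["hired"] for r in records if has_lexical_error(r["hired"])]
salaries_usd = [salary_in_usd(r["salary"], r["currency"]) for r in records]
```

Note that the pattern only checks the shape of the value; a string such as `2023-13-45` would still pass and needs a separate validity check.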

Semantically Dirty Data

- Integrity constraint violations.

- Contradictions – Values that violate business rules or dependencies between attributes.

- Duplicate records causing inconsistencies.
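
Semantic problems usually have to be checked against rules rather than formats. As an illustrative sketch, the queries below look for orders that reference a non-existent customer (a referential integrity violation) and for orders that break an assumed business rule that totals cannot be negative. The tables and the rule are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
    INSERT INTO customer VALUES (1, 'Alice');
    INSERT INTO orders VALUES (10, 1, 25.0), (11, 2, 40.0), (12, 1, -5.0);
""")

# Referential integrity: orders pointing to a customer that does not exist.
orphans = conn.execute("""
    SELECT o.id
    FROM orders o
    LEFT JOIN customer c ON c.id = o.customer_id
    WHERE c.id IS NULL
""").fetchall()

# Assumed business rule: an order total must not be negative.
negative_totals = conn.execute(
    "SELECT id FROM orders WHERE total < 0"
).fetchall()
```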

Coverage Anomalies

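- Missing Attribute Values – Values omitted during data collection, for example nulls in columns that should be NOT NULL, caused by equipment or human error.

- Missing Records – Entire records that exist in the real world or in the source system but are absent from the dataset.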

Handling Missing Records

Strategies include:

- Dropping records with missing critical data.

- Filling missing values manually or using statistical methods (mean, median).

- Using global constants or the most probable values based on patterns.
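
The first two strategies might look like this in Python. The record layout and the choice of `customer_id` as the critical field are assumptions for the example; whether to drop or impute, and whether the mean or the median is appropriate, depends on the data.

```python
from statistics import mean, median

CRITICAL_FIELD = "customer_id"  # assumed to be required for every record

records = [
    {"customer_id": 1, "age": 30},
    {"customer_id": 2, "age": None},     # non-critical value missing
    {"customer_id": None, "age": 41},    # critical value missing
    {"customer_id": 4, "age": 50},
]

# Strategy 1: drop records whose critical field is missing.
kept = [r for r in records if r[CRITICAL_FIELD] is not None]

# Strategy 2: fill remaining gaps with a statistic of the known values.
known_ages = [r["age"] for r in kept if r["age"] is not None]
mean_age = mean(known_ages)
median_age = median(known_ages)  # less sensitive to outliers than the mean

imputed = [
    {**r, "age": r["age"] if r["age"] is not None else median_age}
    for r in kept
]
```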

Key-Based Classification of Problems

Primary Key Problems

- Same primary key with different data.

- Same entity with different keys across systems.

- Primary key format inconsistencies.
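
A simple check for these problems is to normalize key formats and then look for keys that map to more than one distinct value. The sample rows and the canonical key format (uppercase, no hyphens) are assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical (key, name) pairs gathered from two source systems.
rows = [
    ("C-001", "Alice"),
    ("c001", "Alice"),    # same entity, different key format
    ("C-002", "Bob"),
    ("C-002", "Robert"),  # same key, different data
]

def normalize_key(key):
    """Bring keys into one assumed canonical format: uppercase, no hyphens."""
    return key.upper().replace("-", "")

names_by_key = defaultdict(set)
for key, name in rows:
    names_by_key[normalize_key(key)].add(name)

# Keys associated with more than one distinct name need manual or rule-based review.
conflicts = {k: sorted(v) for k, v in names_by_key.items() if len(v) > 1}
```

Detecting the same entity stored under entirely unrelated keys is harder and generally requires matching on non-key attributes.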

Non-Primary Key Problems

- Blank required fields.

- Erroneous or incomplete data.

- Null values in critical fields.

Steps in Data Cleansing

- Parsing – Identify and separate individual data elements.

- Correcting – Fix errors using algorithms or secondary sources.

- Standardizing – Apply business rules to convert data into a consistent format.

- Matching – Identify duplicates within and across datasets.

- Consolidating – Merge matched records into a single representation.
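
The five steps can be chained into a small pipeline. This is a deliberately simplified sketch: the input lines, the correction lookup, and the exact-match rule are all assumptions, and production matching usually relies on fuzzy comparison rather than exact equality.

```python
# Hypothetical free-text input in the form "name, city".
raw = ["Dr. john SMITH, Lahore", "John Smith ,lahor", "Sara Khan, Karachi"]

# Hypothetical lookup of known misspellings, standing in for a secondary source.
CITY_CORRECTIONS = {"lahor": "lahore"}

def parse(line):
    """Parsing: split a line into its name and city elements."""
    name, city = [part.strip() for part in line.split(",")]
    return {"name": name, "city": city}

def correct(rec):
    """Correcting: fix known errors using the lookup table."""
    city = CITY_CORRECTIONS.get(rec["city"].lower(), rec["city"])
    return {**rec, "city": city}

def standardize(rec):
    """Standardizing: drop the title prefix and apply consistent casing."""
    name = rec["name"]
    if name.lower().startswith("dr. "):
        name = name[4:]
    return {"name": name.title(), "city": rec["city"].title()}

def match_and_consolidate(records):
    """Matching and consolidating: merge records with identical name and city."""
    merged = {}
    for rec in records:
        merged.setdefault((rec["name"], rec["city"]), rec)  # keep first occurrence
    return list(merged.values())

cleaned = match_and_consolidate([standardize(correct(parse(line))) for line in raw])
```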

Automatic Data Cleansing Techniques

- Statistical Methods – Detect outliers using mean, standard deviation, etc.

- Pattern-Based Methods – Identify inconsistencies based on common patterns in datasets.

- Clustering – Group records using distance-based algorithms to find anomalies.

- Association Rules – Records not following high-confidence rules are flagged as outliers.
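
The statistical approach is the simplest of these to illustrate. The sketch below flags values whose z-score (distance from the mean in standard deviations) exceeds a threshold. The sample salaries and the threshold of 2.0 are assumptions; in small samples a single extreme value inflates the standard deviation, so a commonly quoted threshold of 3 may never be reached.

```python
from statistics import mean, stdev

def zscore_outliers(values, threshold=2.0):
    """Return the values lying more than `threshold` standard deviations from the mean."""
    mu = mean(values)
    sigma = stdev(values)  # sample standard deviation
    if sigma == 0:
        return []  # all values identical, nothing can be an outlier
    return [v for v in values if abs(v - mu) / sigma > threshold]

# Hypothetical salaries in thousands; 500 is a likely data entry error.
salaries = [52, 48, 50, 51, 49, 50, 47, 53, 500]
outliers = zscore_outliers(salaries)
```

Flagged values are candidates for review rather than confirmed errors, since a genuine extreme value looks the same to this test.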

ETL vs. ELT: Understanding the Difference

- ETL (Extract, Transform, Load): Data is transformed in a separate processing layer, such as a dedicated ETL server or staging area, before it is loaded into the warehouse.

- ELT (Extract, Load, Transform): Raw data is loaded first; transformations then run inside the target database using SQL or Python.

The two approaches can also be combined. The right mix depends on performance, data volume, and processing requirements.
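
To make the ELT ordering concrete, the sketch below loads untouched raw strings into a staging table and only then transforms them with SQL inside the database. SQLite stands in for the warehouse, and the `raw_sales` and `sales` tables are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stands in for the target warehouse

# Load: raw values go in exactly as extracted, all as text.
conn.execute("CREATE TABLE raw_sales (id TEXT, amount TEXT, region TEXT)")
raw_rows = [("1", " 10.5 ", "north"), ("2", "7", "NORTH"), ("3", "12", "South")]
conn.executemany("INSERT INTO raw_sales VALUES (?, ?, ?)", raw_rows)

# Transform: cleaning and typing happen inside the database using SQL.
conn.execute("""
    CREATE TABLE sales AS
    SELECT CAST(id AS INTEGER)        AS id,
           CAST(TRIM(amount) AS REAL) AS amount,
           UPPER(region)              AS region
    FROM raw_sales
""")
conn.commit()

sales = conn.execute("SELECT id, amount, region FROM sales ORDER BY id").fetchall()
```

In an ETL design, the same trimming and casting would instead run in application code before the insert, and the staging table of raw strings would not exist in the warehouse.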

Conclusion

Data Loading and Cleansing are the backbone of any successful ETL process, ensuring that the information entering a data warehouse is accurate, consistent, and analytics-ready. Without strong loading strategies and effective cleansing techniques, even the most advanced data warehouse can produce misleading insights, resulting in poor decision-making and operational inefficiencies.

By selecting the right loading strategy (trickle feed, incremental loading, or full refresh), organizations can balance performance, data freshness, and system stability. Understanding and addressing common data issues such as syntactic errors, semantic inconsistencies, missing values, and key-related anomalies helps maintain a trustworthy data environment.

Modern businesses also benefit from automated cleansing techniques like statistical analysis, clustering, pattern detection, and association rules, which significantly reduce manual effort and improve overall data quality. When combined with a scalable ETL or ELT framework, these approaches ensure that data transformation workflows remain efficient and resilient as data volumes grow.

Ultimately, mastering data loading and cleansing lets organizations build reliable data warehouses, support accurate reporting, strengthen business intelligence systems, and lay a solid foundation for advanced analytics, machine learning, and AI-driven insights. Companies that invest in high-quality ETL processes are better positioned to compete on the strength of their data.